langchain 很多例子里面,默认都是调用的OpenAI的模型,但是有时候我们希望使用自己本地的大模型。具体代码如下:

from transformers import AutoTokenizer, AutoModelForCausalLM, pipeline

from langchain import LLMChain,HuggingFacePipeline,PromptTemplate

import torch

model_path = "写入模型存在路径"

device = torch.device("cuda:0")

tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

model = AutoModelForCausalLM.from_pretrained(model_path, trust_remote_code=True, device_map="auto").half()

pipe = pipeline(

"text-generation",

model=model,

tokenizer=tokenizer,

max_length=512,

top_p=1,

repetition_penalty=1.15

)

llama_model = HuggingFacePipeline(pipeline=pipe)

template = '''

#背景信息#

你是一名知识丰富的导航助手,了解中国每一个地方的名胜古迹及旅游景点.

#问题#

游客:我想去{地方}旅游,给我推荐一下值得玩的地方?"

'''

prompt = PromptTemplate(

input_variables=["地方"],

template=template

)

chain = LLMChain(llm=llama_model, prompt=prompt)

print(chain.run("天津"))注意:

这行代码一定要写成 device_map="auto"

AutoModelForCausalLM.from_pretrained(model_path, trust_remote_code=True, device_map="auto").half()

如果代码写成.cuda(),具体如下

AutoModelForCausalLM.from_pretrained(model_path, trust_remote_code=True, ).half().cuda

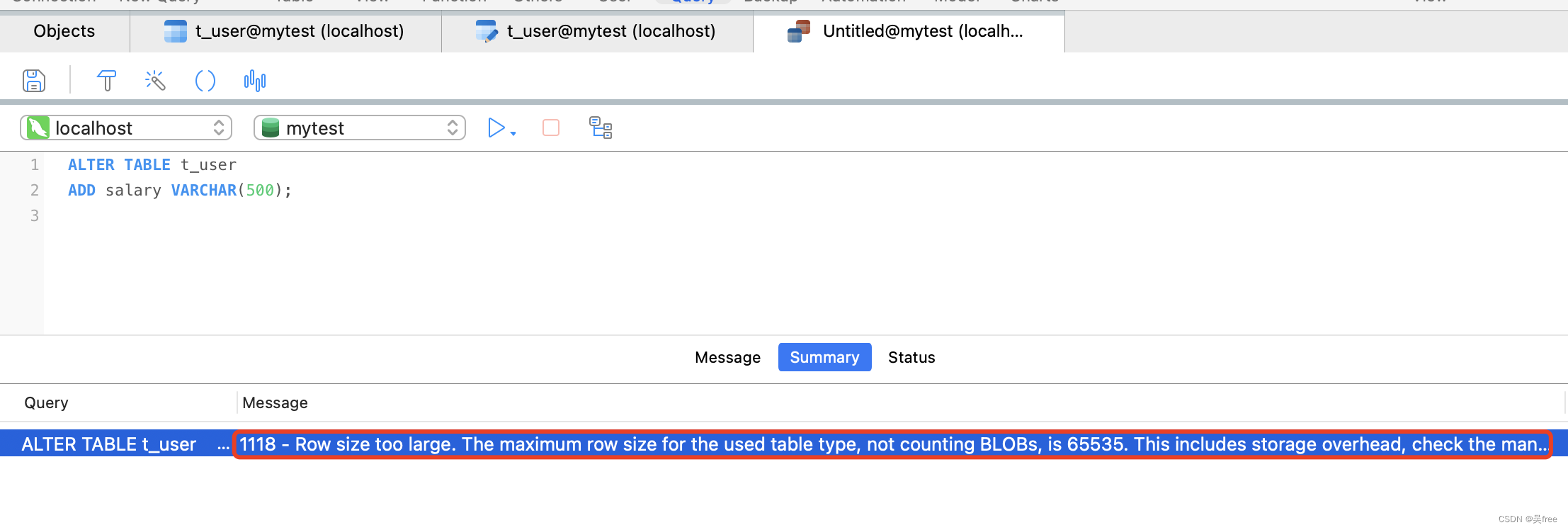

会报错:

RuntimeError: Expected all tensors to be on the same device, but found at least two devices.

或者

warning :

You are calling .generate() with the input_ids being on a device type different than your model's device. input_ids is on cuda, whereas the model is on cpu. You may experience unexpected behaviors or slower generation. Please make sure that you have put input_ids to the correct device by calling for example input_ids = input_ids.to('cpu') before running .generate().

![[前端]NVM管理器安装、nodejs、npm、yarn配置](https://img-blog.csdnimg.cn/direct/47fee072ad924dcb8722a584cf7ae6f4.png)